
LLM Citation Monitoring: The Signal AI Search Trusts

TL;DR

Direct answer: LLM citation monitoring tracks how AI tools like ChatGPT, Gemini, and Perplexity mention your brand — including how often, how accurately, and how you compare to competitors.

Key Takeaways:

- Measure AI-Driven Share of Voice: Calculate your brand's citation percentage across AI responses for your target keywords.

- Automate Tracking Across Platforms: Use tools like Nightwatch, Keyword.com, and AirOps to run scheduled prompts across major LLMs.

- Optimize Content for LLM Preferences: Align your site with E-E-A-T signals, structured data, FAQ schema, and content freshness that AI models prioritize.

LLM citation monitoring means watching how AI tools like ChatGPT, Gemini, and Perplexity mention your brand. It shows how often you appear, whether descriptions are accurate, and where competitors are winning visibility. As generative search becomes a main discovery channel, tracking citations is no longer optional; it is the foundation of AI brand strategy. Read on to build a monitoring workflow that protects and grows your brand in generative search.

Why LLM Citation Monitoring Matters for 2026 Marketing

AI search is now a main discovery channel, not a side tool. When people ask ChatGPT or Perplexity for product ideas or market context, the brands that get cited become the default choice. If you are not tracking those mentions, you cannot see your true share of voice in generative search.

LLM citation monitoring gives you visibility into a space that is otherwise opaque. It shows how often you appear, how you are described, and where you are missing. As brands compete for visibility in AI-generated answers, this practice has become a core component of any serious generative engine optimization (GEO) strategy.

Key reasons to monitor LLM citations in 2026:

- Measure competitive position: Are you the main recommendation or one of many?

- Protect accuracy: Are your pricing, features, and use cases described correctly?

- Find content gaps: Where are models skipping you or linking to competitors instead?

- Support GEO (Generative Engine Optimization): Use the data to shape topics, formats, and sources that models pull from.

"Share of voice measures the percentage of AI answers mentioning your brand versus total answers for target queries."

— Nick Lafferty on AI visibility benchmarks [1]

Done well, this turns AI answers from a black box into a clear signal of how your brand is actually perceived.

Top LLM Citation Tracking Tools for 2026

Choosing the right LLM citation monitoring tools allows teams to understand how often their brand appears in AI answers, how it is framed, and which competitors are gaining visibility. Different tools lean in different directions, so the match matters more than the name.

At a basic level, you want:

- Multi-model coverage (ChatGPT, Gemini, Claude, Perplexity at minimum)

- Automated querying, not manual checks

- Context on how you are mentioned, not just if you are mentioned

Core features to look for:

- Breadth of LLM coverage

- Depth of analysis (sentiment, accuracy, positioning)

- Reporting cadence (real-time alerts vs weekly reports)

- Workflow fit (exports, integrations, and dashboards your team will actually use)

Platforms like GeekyExpert are built specifically for this: tracking how AI answers mention your brand across multiple LLMs and surfacing actionable data. You can run one of our research reports to see where your brand stands today.

| Tool | Primary Strengths | Supported LLMs |
|---|---|---|
| Nightwatch | Citation sentiment, prompt research, competitor analysis | ChatGPT, Claude, Gemini, Perplexity, Grok |
| Keyword.com | Brand portrayal tracking, keyword-based trigger analysis | ChatGPT, Gemini, Claude, Perplexity |
| AirOps Insights | Accuracy checks, trend lines, weekly citation tracking | ChatGPT, Perplexity, Gemini, Claude |
| Clearscope | Topic-based AI relevance for existing content inventory | Major engines |

Pricing ranges from accessible plans for smaller teams to custom enterprise tiers with large-scale monitoring and advanced reporting. The best choice is the one that matches your monitoring frequency, depth of insight, and the models that matter most to your audience.

How LLMs Choose Which Sources to Cite

Large language models follow recognizable patterns when deciding what to cite. They aim to give helpful, accurate answers, so they lean toward sources that look trustworthy and well-structured. This aligns closely with how an answer engine optimization (AEO) strategy builds citation authority through E-E-A-T signals.

Key factors that shape LLM citation decisions:

- Authority signals: Domains like Wikipedia, major news outlets, and respected industry sites are often used as factual anchors.

- Content structure: Clear headings, simple paragraphs, and FAQ or product schema (Schema.org) make it easier for models to extract and reuse your information.

- Information freshness: Newer content often wins for fast-moving topics, so regular updates help you stay cite-worthy. [2]

- Community validation: Thoughtful posts on platforms like Reddit, LinkedIn, GitHub, or niche forums can also be picked up as signals of relevance and lived experience.

Core Steps to Build a Citation Monitoring Workflow

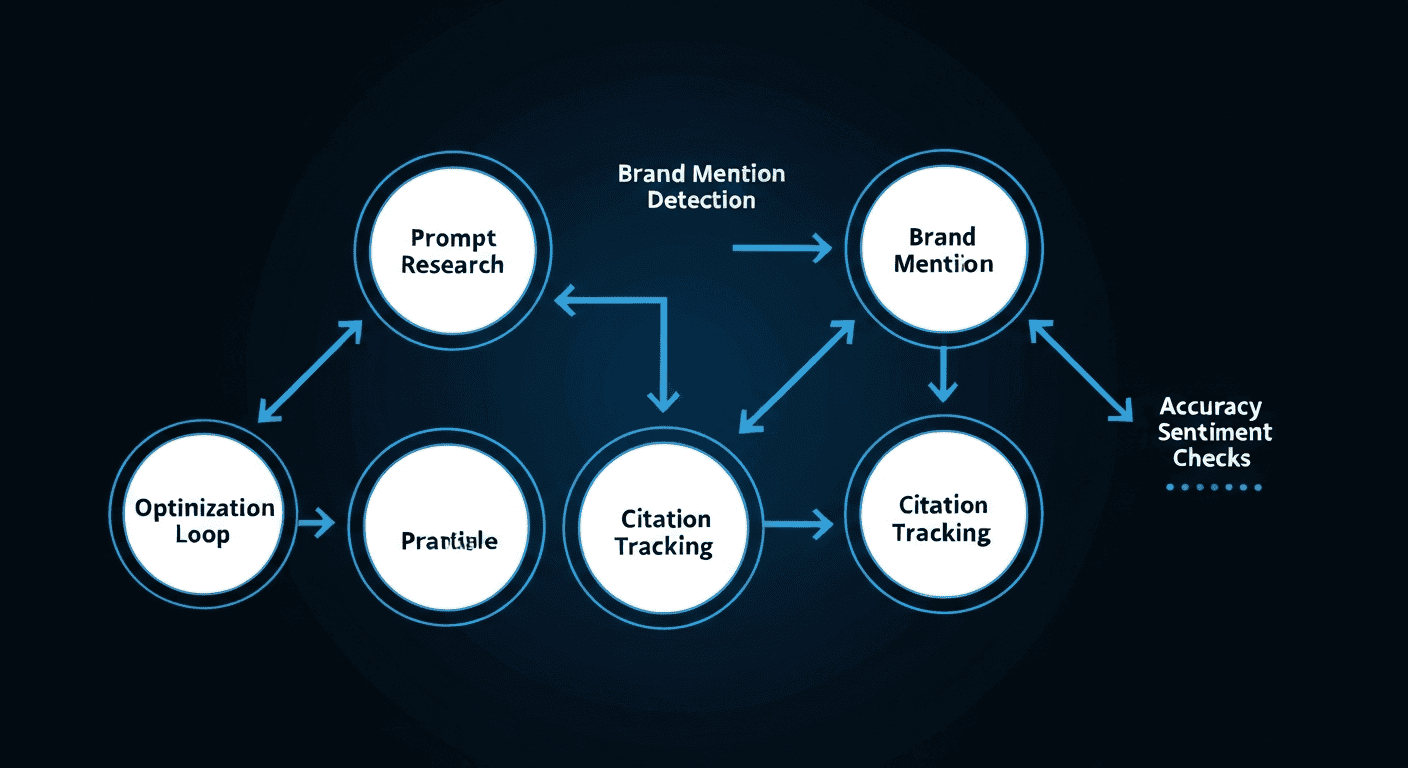

Every solid workflow starts with knowing where you stand. You begin by defining your territory. List the keywords, brand terms, and feature phrases that are tightly connected to your product and category, then group them into topic clusters in your monitoring tool.

For example, a CRM company might group:

- "sales software"

- "customer relationship management"

- Feature terms like "pipeline tracking" or "sales automation"

These clusters form a structured monitoring framework. You are not watching random keywords — you are tracking the conversation space you want to lead.
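As a rough illustration, the clusters can be kept as plain data and expanded into the prompts you will later schedule. This is a minimal sketch: the cluster names, the prompt templates, and the `build_prompts` helper are all assumptions made up for this example, not part of any monitoring tool.

```python
from itertools import product

# Topic clusters for the CRM example above. The cluster names are hypothetical.
TOPIC_CLUSTERS = {
    "category": ["sales software", "customer relationship management"],
    "features": ["pipeline tracking", "sales automation"],
}

# Hypothetical templates meant to mirror how buyers phrase questions.
PROMPT_TEMPLATES = [
    "What are the best tools for {term}?",
    "Which vendors would you recommend for {term}?",
]


def build_prompts(clusters, templates):
    """Return one record per (cluster, term, template) combination."""
    prompts = []
    for cluster, terms in clusters.items():
        for term, template in product(terms, templates):
            prompts.append(
                {
                    "cluster": cluster,
                    "term": term,
                    "prompt": template.format(term=term),
                }
            )
    return prompts


prompts = build_prompts(TOPIC_CLUSTERS, PROMPT_TEMPLATES)
for record in prompts:
    print(record["cluster"], "|", record["prompt"])
```

Keeping the cluster label on every prompt means later results can be rolled up by topic rather than by individual keyword.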

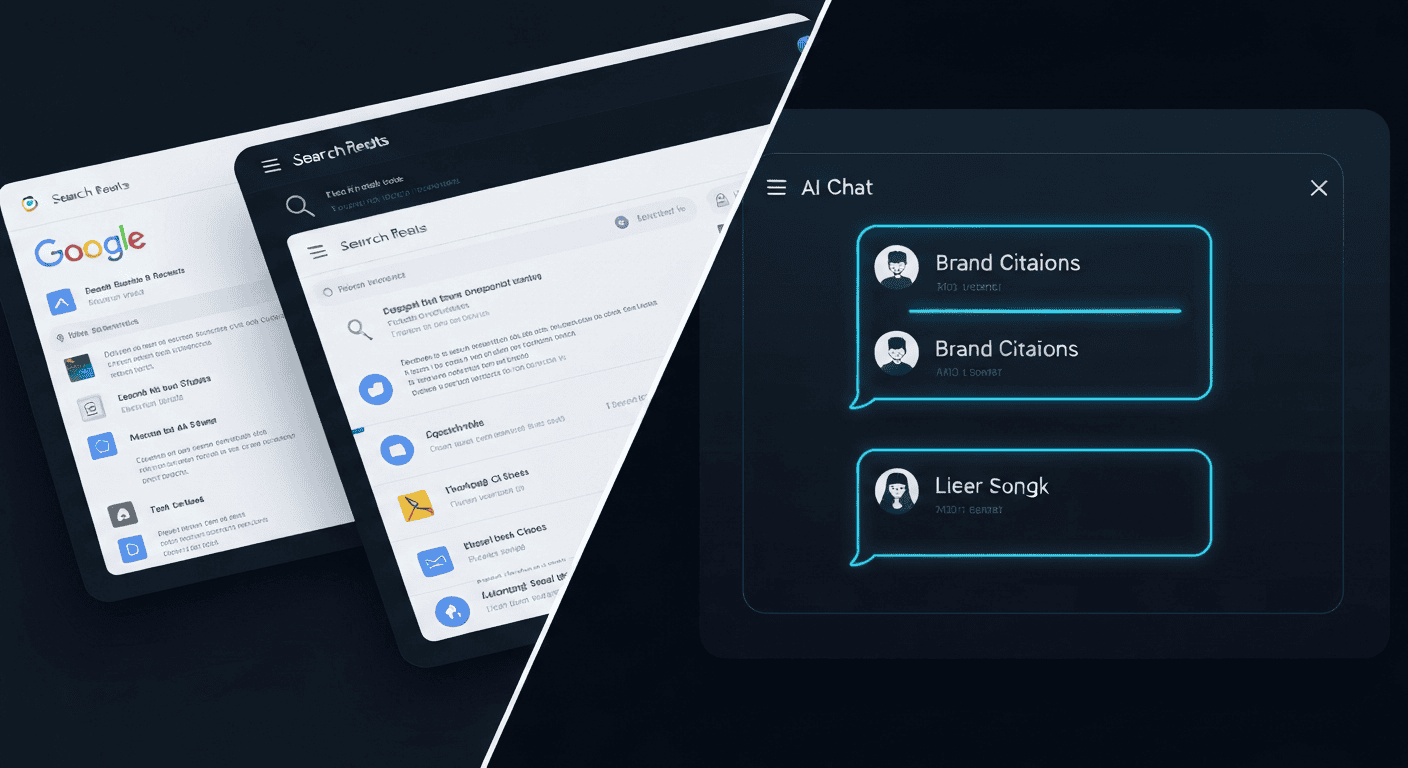

Once topics are clear, you automate the asking. Create realistic prompts that mirror user queries and schedule them to run on major LLMs like ChatGPT, Gemini, Claude, and Perplexity. A weekly cadence is common.

Key automation goals:

- Track how often your brand is cited

- Detect shifts after model updates

- Spot competitor gains in visibility

This turns one-off checks into a consistent dataset, giving you a timeline of your AI visibility without heavy manual work.
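To make the "consistent dataset" idea concrete, here is a small sketch of what the bookkeeping might look like once answers have been collected. The brand names, dates, and answer texts are invented for illustration, and the collection step itself is left out because it depends on whichever tool or API you use.

```python
import re
from collections import Counter

BRANDS = ["Acme CRM", "ExampleSoft"]  # hypothetical brand names


def count_mentions(answers, brands):
    """Count how many answers mention each brand at least once."""
    counts = Counter()
    for text in answers:
        for brand in brands:
            # Case-insensitive match on the whole brand phrase.
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                counts[brand] += 1
    return counts


def week_over_week(timeline, brand):
    """Return (week, change in mention count) for each consecutive pair of runs."""
    weeks = sorted(timeline)
    return [
        (cur, timeline[cur][brand] - timeline[prev][brand])
        for prev, cur in zip(weeks, weeks[1:])
    ]


# Hypothetical answers collected in two weekly runs.
runs = {
    "2026-01-05": ["Acme CRM is a solid pick.", "Consider ExampleSoft or Acme CRM."],
    "2026-01-12": ["ExampleSoft leads here.", "Many teams choose ExampleSoft."],
}

timeline = {week: count_mentions(answers, BRANDS) for week, answers in runs.items()}
print(week_over_week(timeline, "Acme CRM"))
print(week_over_week(timeline, "ExampleSoft"))
```

A sudden drop for one brand alongside a gain for another, as in this made-up data, is the kind of shift worth checking against recent model updates.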

Measuring Share of Voice and Turning Insights Into Action

Instead of focusing on traditional search positions, brands need to measure AI share of voice by analyzing how often they appear in LLM answers compared to competitors. This metric connects directly to LLM ranking optimization — the higher your share of voice, the more your content is being selected and trusted.

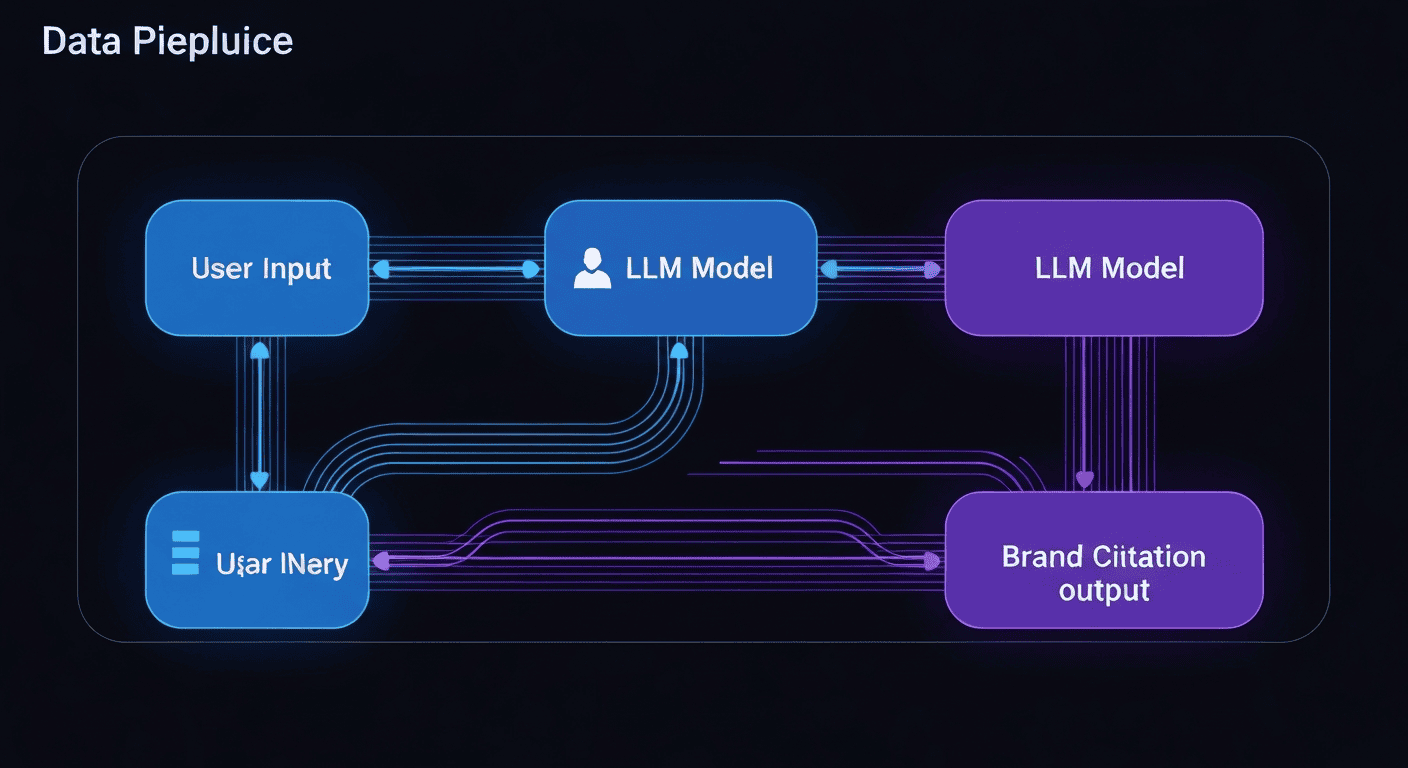

After data collection, you move into analysis. Raw citation counts are helpful, but they are only the starting point. One of the most useful metrics here is Share of Voice (SOV).

Basic SOV formula:

SOV = your brand's citations ÷ total citations for all relevant brands

If your brand appears 150 times in "marketing automation" answers, and total brand mentions in that dataset are 1,500, your SOV is 150 ÷ 1,500 = 10%. This gives you a clear, percentage-based view of your presence within AI-generated answers.
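The same arithmetic can be sketched in a few lines of code. The 150 and 1,500 figures come from the example above; the split of the remaining 1,350 citations between two competitors is an assumption added only so the totals add up.

```python
def share_of_voice(citations_by_brand):
    """Return each brand's share of all citations as a fraction between 0 and 1."""
    total = sum(citations_by_brand.values())
    if total == 0:
        raise ValueError("no citations recorded for any brand")
    return {brand: count / total for brand, count in citations_by_brand.items()}


# "Your brand" and the 1,500 total match the worked example;
# the competitor split is hypothetical.
citations = {"Your brand": 150, "Competitor A": 900, "Competitor B": 450}

sov = share_of_voice(citations)
print(f"{sov['Your brand']:.0%}")  # prints 10%
```

Computing every brand's share from the same totals keeps the percentages comparable and guarantees they sum to 100%.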

To deepen the workflow:

- Analyze sentiment: Is the mention positive, neutral, or negative?

- Check positioning: Are you the main recommendation or just one of several alternatives?

- Identify gaps: Where are you missing versus key competitors or key topics?
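For the positioning check, one crude proxy is the order in which brands first appear in an answer. The sketch below assumes that the first-mentioned brand is the main recommendation, which will not always hold, and the brand names and answer text are hypothetical. Sentiment is left out because it needs a separate classifier.

```python
def mention_order(answer, brands):
    """Return the brands found in `answer`, ordered by first appearance."""
    lowered = answer.lower()
    positions = {brand: lowered.find(brand.lower()) for brand in brands}
    found = [brand for brand in brands if positions[brand] != -1]
    return sorted(found, key=positions.get)


# Hypothetical answer text and brand list.
answer = (
    "For small teams, ExampleSoft is the top pick; "
    "Acme CRM and OtherCo are reasonable alternatives."
)
brands = ["Acme CRM", "ExampleSoft", "MissingBrand"]

order = mention_order(answer, brands)
print(order)  # ['ExampleSoft', 'Acme CRM']

# Brands that never appear are candidates for the gap analysis.
gaps = [brand for brand in brands if brand not in order]
print(gaps)  # ['MissingBrand']
```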

Then, connect findings to action:

- Update or expand website content for underperforming topics

- Improve technical SEO so LLMs can parse your site more easily

- Refine seeding and PR strategies to strengthen your authority signals

Tactics to Increase Brand Citation Frequency

Brands that show up more in AI answers usually start with strong SEO basics. Your site needs to be easy for crawlers to read: clean structure, fast load times, and clear internal links. Use specific H1 headings that state the page's main topic, not vague slogans. Add structured data where relevant, especially FAQ schema, since it mirrors the question–answer format LLMs tend to reuse.

Key on-site actions:

- Use clear, topic-focused H1 and subheadings

- Implement FAQ schema for core questions

- Keep technical SEO healthy (speed, sitemaps, crawlability)
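As an example of the FAQ schema item, the following sketch builds Schema.org `FAQPage` markup as JSON-LD. The `faq_jsonld` helper is a made-up name for this illustration; the question and answer reuse wording from this article's own FAQ.

```python
import json


def faq_jsonld(qa_pairs):
    """Build a Schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


markup = faq_jsonld(
    [
        (
            "What is LLM citation tracking?",
            "LLM citation tracking follows how large language models cite "
            "or mention brand content across AI platforms.",
        )
    ]
)
print(json.dumps(markup, indent=2))
```

The printed JSON would typically be embedded in the page inside a `<script type="application/ld+json">` tag.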

Then move beyond your own domain. Share expert content on platforms models often learn from, like LinkedIn articles, niche subreddits, or Q&A sites. You are seeding accurate, high-quality signals in public spaces.

Pair that with regular monitoring:

- Run weekly checks for hallucinations or outdated claims

- Update your site and high-authority profiles with correct, structured details

- Create content that mirrors real AI queries in your niche

Think of it as a loop: seed, monitor, correct, and refine — so models have fewer chances to get your brand wrong. This mirrors the approach outlined in our guide on AI citation tracking tools for building a defensible presence in generative search.

Your Path to AI Visibility

LLM citation monitoring is the foundation of brand visibility in the AI search era. Knowing where you appear, how you are described, and where competitors are winning gives you the data to act — not guess.

The brands that win in generative search are the ones that treat citation monitoring as an ongoing system, not a one-time audit. They seed accurate signals, check results weekly, and use what they find to improve both content and authority. That loop compounds over time.

GeekyExpert supports B2B and SaaS leaders with practical research for smarter decisions. Explore AI visibility, citation tracking, and LLM optimization to strengthen long-term generative search authority and credibility. If you want to start monitoring your brand's AI citations right now, GeekyExpert gives you a direct view of how ChatGPT, Gemini, and Perplexity are talking about your brand. Run one of our research reports and see the gaps.

Frequently Asked Questions

What is LLM citation tracking and how does it improve AI visibility monitoring?

LLM citation tracking follows how large language models cite or mention brand content across AI platforms. It supports AI visibility monitoring across ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews. Teams review citation counts, detected brand mentions, and how accurately the brand is portrayed, then check those findings against E-E-A-T signals and source credibility on each platform. This data directly informs GEO and AEO strategies.

How do GEO strategies help brands appear correctly in generative engines?

Generative engine optimization (GEO) shapes how models understand and reference your content. It includes structured data, Schema.org markup, FAQ schema, clear H1 headings, and optimized meta descriptions. These steps make content easier for models to parse, keep it fresh, strengthen domain authority, and reveal content gaps. Brands that align content with GEO principles tend to be cited more often.

How can teams measure share of voice and competitor positioning in LLMs?

Teams use citation frequency analysis, share of voice (SOV) formulas, and visibility percentage metrics to compare performance. SOV = your brand citations ÷ total citations for all relevant brands across monitored queries. Competitor benchmarking reviews how competitors are positioned in LLM answers, how rankings change between runs, and where gaps remain. Tracking on a weekly cadence shows how AI visibility and brand positioning shift over time.

What methods detect incorrect or missing citations from AI answers?

Monitoring uses explicit hyperlink tracking, implicit reference detection, and text mention extraction to find brand appearances. Paraphrase match scoring and semantic similarity checks help surface hidden or indirect mentions. Source credibility checks, accuracy audits, and hallucination detection reduce errors in how AI describes your brand. Regular weekly checks combined with structured content updates minimize the risk of outdated or wrong information persisting in AI answers.
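As a rough stand-in for paraphrase or similarity scoring, the sketch below uses character-level string similarity from Python's standard `difflib` module to catch spelling variants of a brand name. This is not semantic similarity, which would need an embedding model. The brand name, sample sentence, and the 0.85 threshold are all assumptions for illustration.

```python
from difflib import SequenceMatcher


def fuzzy_mentions(text, brand, threshold=0.85):
    """Return (window, score) pairs for word windows spelled similarly to `brand`."""
    words = text.split()
    target = brand.lower()
    # Allow windows one word longer than the brand to catch split spellings.
    max_size = len(brand.split()) + 1
    hits = []
    for size in range(1, max_size + 1):
        for start in range(len(words) - size + 1):
            window = " ".join(words[start:start + size]).strip(".,;:!?").lower()
            score = SequenceMatcher(None, window, target).ratio()
            if score >= threshold:
                hits.append((window, round(score, 2)))
    return hits


# Hypothetical brand and answer text: the answer spells the brand as one word.
hits = fuzzy_mentions("Reviewers often mention AcmeCRM for pipeline tracking.", "Acme CRM")
print(hits)
```

An exact-match search for "Acme CRM" would miss this mention, so a looser pass like this one can complement explicit text matching, at the cost of occasional false positives that need manual review.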

How does prompt testing automation support ongoing citation monitoring?

Prompt testing automation runs scheduled queries across LLM platforms using realistic user prompts that mirror how your target audience searches. It maps gaps across prompt clusters and identifies where your brand is absent from AI responses. Results feed content strategy updates, authority-building campaigns, and AI content recommendations. Real-time ranking updates and web visibility scanning keep insights current between manual review cycles.

About Geeky Expert

Geeky Expert is a leading provider of research and insights, dedicated to helping businesses make informed decisions through comprehensive analysis.